Well, the biggest information is that hot-backups on slave work. And they work fine. Really fine.

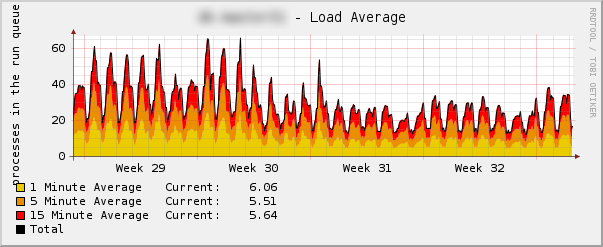

Some more information (with nice graph!):

Background: hot backup is backup of database server, done with backing up data files, and not issuing pg_dump. There are certain benefits of doing it – for example the fact that if you'd also backup wal segments someplace, you can restore database state up to last segment rotation, and not only to last backup time!

So, we have this really beefy server. When I say beefy, I do mean it – I cannot give details, so just please trust me that it's really, really powerful.

This server, is working as main DB server for some website, and as WAL-Replication master for secondary server (slave).

Using OmniPITR, we do send wal segments to slave, and also to backup server – server which just stores hot backups (done daily) and wal segments (both kept couple of days). Database is ~ 350GB.

Because usually you can make hot backups only on master, that's how we've been doing it. But since OmniPITR can make hot backups on slave server – we tried it. After running both backups for some time, and daily testing if the slave backup is working, we switched off hot backup on master.

Result:

Switching off happened on 1st day of 31st week (after hot backup on this day started), so since 2nd day of 31st week, we no longer have backups from master. Load decreased very nicely – which is kind of obvious, because we just removed necessity to make 100+GB tar.gz files on it, on daily basis!

These backups (taken from slave) are tested now weekly by automatic procedure, and they work just fine.

What's more – because slave has lower load than master, backups take less time than they did before, which in turn means that they are smaller (backup has to contain all wal segments that appeared during compression of $PGDATA). All in all – great stuff.

Next steps for OmniPITR – I'm working on documentation on how it internally works, why some design choices were made, and what are unexpected side effects of using various functionalities of OmniPITR (for example: usage of compressed destination on slave server, increases disk space usage when making hot backup on slave).

As soon as this documentation will be ready, I will move on to writing omnipitr-monitor (program/script that will be used from cacti/nagios – type of tools, to monitor and plot graphs). Afterwards – we will stamp version 1.0, and move on to (already existing) todo (if you're curious – it's in doc/todo.pod in OmniPITR distribution

not better rsync the data off to another server, and tar it up there ? That’s the way we do it, and that has even lesser load on the servers.

@GregJ:

rsync from master or from slave? Anyway – if you don’t shutdown the server for the time of rsync you might get not working copy.

@depesz: I thought it’s possible to do a hot backup, when I issue a pg_start_backup() and then do a rsync? Instead of shutting it down

@Thomas:

Absolutely, it’s possible. But: you have to do it on master (which we don’t want as it generates non-trivial load), and you have to have wal archiving enabled (which is pretty normal, and not a big deal, but you have to remember about it).

The reason this is so awesome is that you don’t damage the buffer cache on the master. Postgres relies heavily on keeping the hot portions of the database in the filesystem buffer cache (or ARC on ZFS). An rsync has to read all the bytes in a while which is enormously damaging to performance on highly loaded systems where hot cache hits keep things performant.

Even with BLI (block level incremental) backups, this can be a problem. Doing this stuff on the slave is pure awesome.

that’s what the hot copy is for, you can do it with rsync. I do it, and it works. So it must be more than luck.

And that gives you plenty of speedup, just do it with rsync -avzP

@GJ:

You’re doing copy of master? Did you issue pg_start_backup() before?

if you did issue pg_start_backup() before than it’s normal way of making hot backup.

The biggest problem here is that it works on master – thus generating load, and thrashing disk buffers.

If you are doing it on slave – it’s definitely possible, but doing just plain rsync will have non 0 chance of making backup that doesn’t work.